Smooth runnings: Five Ways to Improve a Dev Team

Datadock Solutions was founded in New York City with one aim: to bring the deep, fast data analytics that large financial services houses use for pricing products into the softer-skills area of choosing who to trade with. By replacing anecdote and ‘sales feel’ with real-time analytics to improve longer-term returns, the equity derivatives team can build an A-team of traders and brokers picked by performance metrics, not by guesswork and hearsay.

Almost in a reverse of Michael Lewis’s classic Moneyball, the world of application metrics is coming full circle: returning the number-crunching approach to picking hitters from baseball, back to the trading floor where it originated. The Datadock platform drives more rigorous, consistent and precise decisions about who’s likely to be a plodder and who might just hit it out of the park.

Kumaran Vijayakumar and Thomas Wadsworth, the two co-founders of Datadock Solutions, had twenty-two years of Wall Street trading and running equities derivatives teams between them when they got together and agreed there had to be a better way. They had a very good idea how big banks typically decide on where to place trades: it’s as much an emotional choice as anything else. They felt that by tracking and surfacing performance metrics over time, an analytics engine could help make those decisions more about look and listen instead of touch and feel.

From the hedge fund side, CTO Martin Adamec then brought his long experience as a data analyst and systems architect to Datadock. Right from the start he opted for MongoDB as the data management backend for Datadock, and Studio 3T as the development environment and toolset for interrogating that data. And along the way Martin has distilled out a few guiding principles that can help turn a sack of cats into a coding bobsleigh.

“We value most of all the fact that the whole team can work together in Studio 3T. It’s not just the software engineers and QA engineers, but account managers and sales analysts too who use it, as much as we do in the dev team.”

– Martin Adamec, CTO, Datadock Solutions

1. Choose the best environment for the team

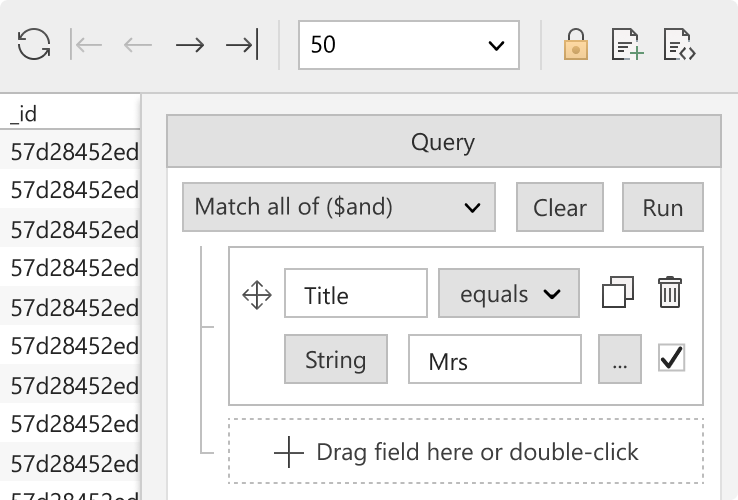

“We value most of all the fact that the whole team can work together in Studio 3T,” Adamec explains. “It’s not just the software engineers and QA engineers, but account managers and sales analysts too who use it. In fact the non-programmers use it as much as we do in the dev team. The Visual Query Builder is kind of where we meet. For programmers it speeds up the writing of long and convoluted queries, ironing out typos and human error. For the non-programmers it works intuitively and requires no coding.”

The Datadock platform comprises a number of tools working in lockstep with the full readout dashboard, called CAMS™ (Customer Advanced Metrics Scorecard), where a range of performance metrics comes to life for each customer: revenue generated, average trade volumes, retention, commission and plenty more. For a deeper dive, Customer Intelligence™ then allows the user to explore individual trade histories and profile the causal roots of performance trends.

To drive CAMS in real time, Datadock uses the MongoDB document store to gather the data used to build profiles of each of the hundreds of trading entities being compared. Python-based AI/ML algorithms then perform intensive computational modeling, along with enhanced ETL that formats the data outputs so they can be viewed in MemSQL, the popular distributed relational database.

2. Make the data visible

“It’s why we have two different log streams,” explains Adamec. “The one for Data Processing logs is about data values and results. If we need to talk about anomalies with the client we can simply show them the relevant document. Studio 3T helps us identify and treat those cases where the results are outside the expected spread.”

“The second logging stream is all about how the program itself is behaving. Did it misfire because of a coding fault? Or is it because down the pipe comes a record we’ve not seen before? Maybe a combination of parameters and data entries that we never prepared for? It would be impractical to try to cover all edge cases before even encountering them. But spotting the oddities when they do show up, that is essential to us, and Studio 3T makes it easy to do.”
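As a rough illustration of the two-stream idea, the Python sketch below keeps data-value messages and program-behavior messages in separate loggers. It is not Datadock's implementation: the logger names, the `process` function and the record fields are hypothetical, and in-memory streams stand in for whatever store the logs are actually written to.

```python
import io
import logging


def make_stream_logger(name: str, stream: io.StringIO) -> logging.Logger:
    """Create a logger that writes only to its own stream."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False  # keep the two streams from mixing via the root logger
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)
    handler.setFormatter(logging.Formatter("%(name)s %(levelname)s %(message)s"))
    logger.addHandler(handler)
    return logger


# Hypothetical in-memory sinks; a real system would write somewhere durable.
data_stream = io.StringIO()
app_stream = io.StringIO()
data_log = make_stream_logger("data_processing", data_stream)
app_log = make_stream_logger("application", app_stream)


def process(record: dict) -> None:
    """Hypothetical processing step that routes messages to the right stream."""
    if "commission" not in record:
        # A record shape the code was not prepared for: program-behavior stream.
        app_log.warning("unseen record shape: keys=%s", sorted(record))
        return
    # Data values and results: data-processing stream.
    data_log.info("client=%s commission=%s", record.get("client"), record["commission"])


process({"client": "A", "commission": 125.0})
process({"client": "B", "fee": 3})
```

Keeping the streams apart means an odd record can be looked up on its own, without wading through messages about data values.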

“Our developers, QA and everyone who touches the application during the process of development, verification and production need to be using Studio 3T. Not only from the perspective of the two logging workstreams, but also the metadata. Each client will have different idiosyncrasies. Sell-side, buy-side, and agencies who put those two together, and collect a margin over and above the commission. They’re all unique.”

“We love that we can simply copy-and-paste collections, and compare and sync up data between environments. We have also used Import/Export extensively.”

“At a conservative estimate our developers probably spend 15% – 20% of their time working on writing, running, and modifying queries. If we didn’t have Studio 3T it would probably take ten times longer to do those jobs.”

– Martin Adamec, CTO, Datadock Solutions

3. Find quicker ways to get more done

“In terms of impacting our operating costs, Studio 3T is a tremendous time-saver. Let’s say we had to do all of this work on the command line. Just imagine going through tens of thousands of logs, running those queries and modifying them. It’s very efficient to be able to change the specific conditions attached to a query. Being able to suppress temporarily one of the condition parameters, running the query again, checking the results, then replacing it to compare the difference. Doing work like that on the command line would take absolutely hours and hours. Impractically long hours.”
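The suppress-and-rerun workflow described in the quote can be approximated in code. The Python sketch below builds a MongoDB-style `$and` filter from named conditions and lets one be switched off for a run. The condition names, the sample log documents and the small in-memory `matches` helper are all hypothetical. The helper handles only equality and `$gte`, so that the example runs without a database.

```python
from typing import Any, Iterable


def build_filter(conditions: dict, suppress: Iterable[str] = ()) -> dict:
    """Combine named conditions into one $and filter, skipping suppressed ones."""
    skipped = set(suppress)
    active = [cond for name, cond in conditions.items() if name not in skipped]
    return {"$and": active} if active else {}


def matches(doc: dict, query: dict) -> bool:
    """Tiny in-memory stand-in for a query engine (equality and $gte only)."""
    for clause in query.get("$and", []):
        for field, expected in clause.items():
            value: Any = doc.get(field)
            if isinstance(expected, dict):
                bound = expected.get("$gte")
                if bound is not None and (value is None or value < bound):
                    return False
            elif value != expected:
                return False
    return True


# Hypothetical log documents and conditions.
logs = [
    {"level": "ERROR", "component": "etl", "duration_ms": 900},
    {"level": "ERROR", "component": "api", "duration_ms": 40},
    {"level": "INFO", "component": "etl", "duration_ms": 1200},
]
conditions = {
    "errors_only": {"level": "ERROR"},
    "etl_only": {"component": "etl"},
    "slow": {"duration_ms": {"$gte": 500}},
}

full = [d for d in logs if matches(d, build_filter(conditions))]
relaxed = [d for d in logs if matches(d, build_filter(conditions, suppress=["errors_only"]))]
print(len(full), len(relaxed))
```

Against a live database, the dictionary returned by `build_filter` could be passed to a driver call such as pymongo's `collection.find(...)` instead of the in-memory helper.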

“Just looking through the MongoDB logs is very time consuming. You’re looking for something specific but you don’t know yet what it is. It’s about narrowing down the scope of the search and iterating again and again. This takes a great deal of time.”

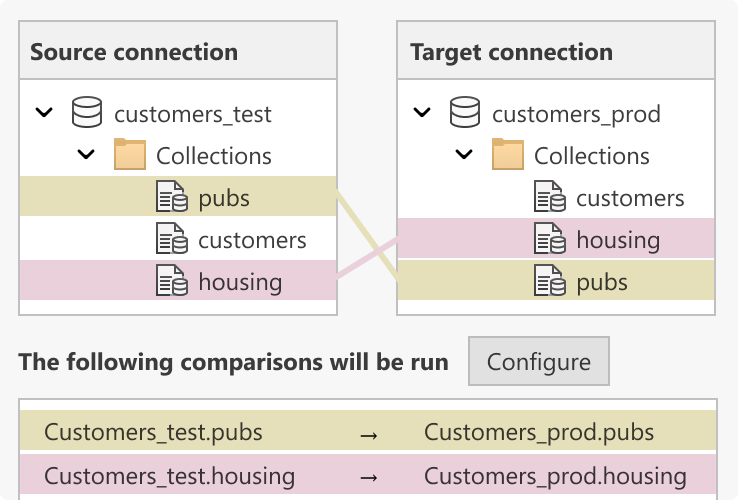

“We also use Data Compare and Sync on a regular cycle, for bringing down production data to provision development and testing environments with the most realistic data. We use asynchronous encryption to ensure obfuscation of the production data before it is restored to development. This is another huge time-saving feature. Testing and QA in particular rely on having the most up-to-date and realistic data to work with. DCAS makes it so.”
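To make the obfuscation step concrete, here is a minimal Python sketch that masks sensitive fields before a document is copied to a development environment. It deliberately swaps in a simpler technique, keyed hashing with HMAC-SHA256, rather than the encryption scheme mentioned in the quote, and should not be read as Datadock's method. The field names and the key are hypothetical.

```python
import hashlib
import hmac

# Hypothetical field names; a real schema would define its own list.
SENSITIVE_FIELDS = ("client_name", "account_id")


def obfuscate(doc: dict, key: bytes, fields=SENSITIVE_FIELDS) -> dict:
    """Return a copy of doc with sensitive fields replaced by keyed hashes."""
    masked = dict(doc)  # shallow copy so the production document is untouched
    for field in fields:
        if field in masked:
            digest = hmac.new(key, str(masked[field]).encode("utf-8"), hashlib.sha256)
            masked[field] = digest.hexdigest()[:16]
    return masked


prod_doc = {"client_name": "Example Fund", "account_id": 42, "commission": 125.0}
dev_doc = obfuscate(prod_doc, key=b"hypothetical-secret")
```

Because the hash is keyed and deterministic, the same client maps to the same token in every document, so joins and groupings in test data still work while the original values stay hidden.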

4. Find easier ways to iterate

“Also, using the Visual Query Builder to visualize the metadata, this is essential for us to verify how our code works for different types of clients. Having a good visual tool for comparing environments, and also for quickly looking it up, checking what conditions we’ve set for this process and then running it again, and again, this is essential for us. For repetitive, meticulous work on code, a team depends on its tools.”

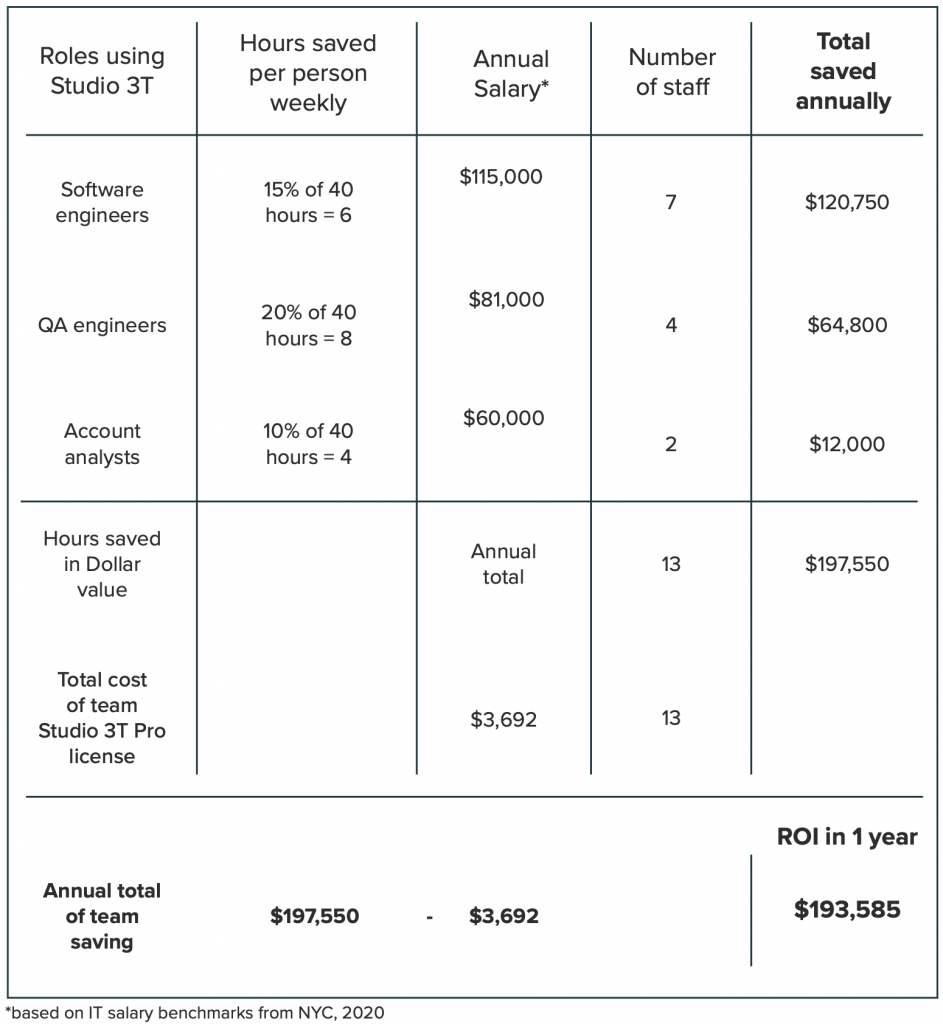

“So, like I say, conservatively, 15% of the developers’ time is saved. For QA a little more, say 20%.”

5. Make the numbers work

An ROI calculation for the Datadock Solutions team using Studio 3T – Pro Edition.
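The figures behind that calculation are not reproduced here, so the Python sketch below shows only the general shape such a calculation might take. The time-saved fractions (15% for developers, 20% for QA) come from the quote above. Everything else, including headcounts, staff costs and the license price, is a placeholder and not Datadock's or Studio 3T's actual data.

```python
from dataclasses import dataclass


@dataclass
class Role:
    headcount: int
    annual_cost_per_person: float  # placeholder, not a real salary figure
    time_saved_fraction: float     # e.g. 0.15 for developers, per the quote


def annual_roi(roles: list, license_cost_per_seat: float) -> float:
    """Return ROI as (value of time saved - license cost) / license cost."""
    seats = sum(r.headcount for r in roles)
    saved = sum(
        r.headcount * r.annual_cost_per_person * r.time_saved_fraction for r in roles
    )
    cost = seats * license_cost_per_seat
    if cost <= 0:
        raise ValueError("license cost must be positive")
    return (saved - cost) / cost


# Placeholder inputs chosen only to exercise the formula.
team = [
    Role(headcount=4, annual_cost_per_person=100_000, time_saved_fraction=0.15),
    Role(headcount=2, annual_cost_per_person=80_000, time_saved_fraction=0.20),
]
roi = annual_roi(team, license_cost_per_seat=1_000)
```

Substituting real headcounts, staff costs and license pricing gives the return as a multiple of the license spend.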

Conclusion

CTO Martin Adamec is clear that the GUI/IDE is used for a range of different time-consuming essentials. All are business critical and Studio 3T saves time with all of them:

- Checking consistency across instances from Dev to Staging, QA and Production

- Debugging application code by verifying changes in data outputs

- Iterating queries with multiple variant conditions

- Exploring and identifying non-standard data

- Using Data Compare and Sync to keep multiple environments in alignment

- Visualizing metadata and analyzing logs

- Copying and pasting collections between databases

- Editing documents in place